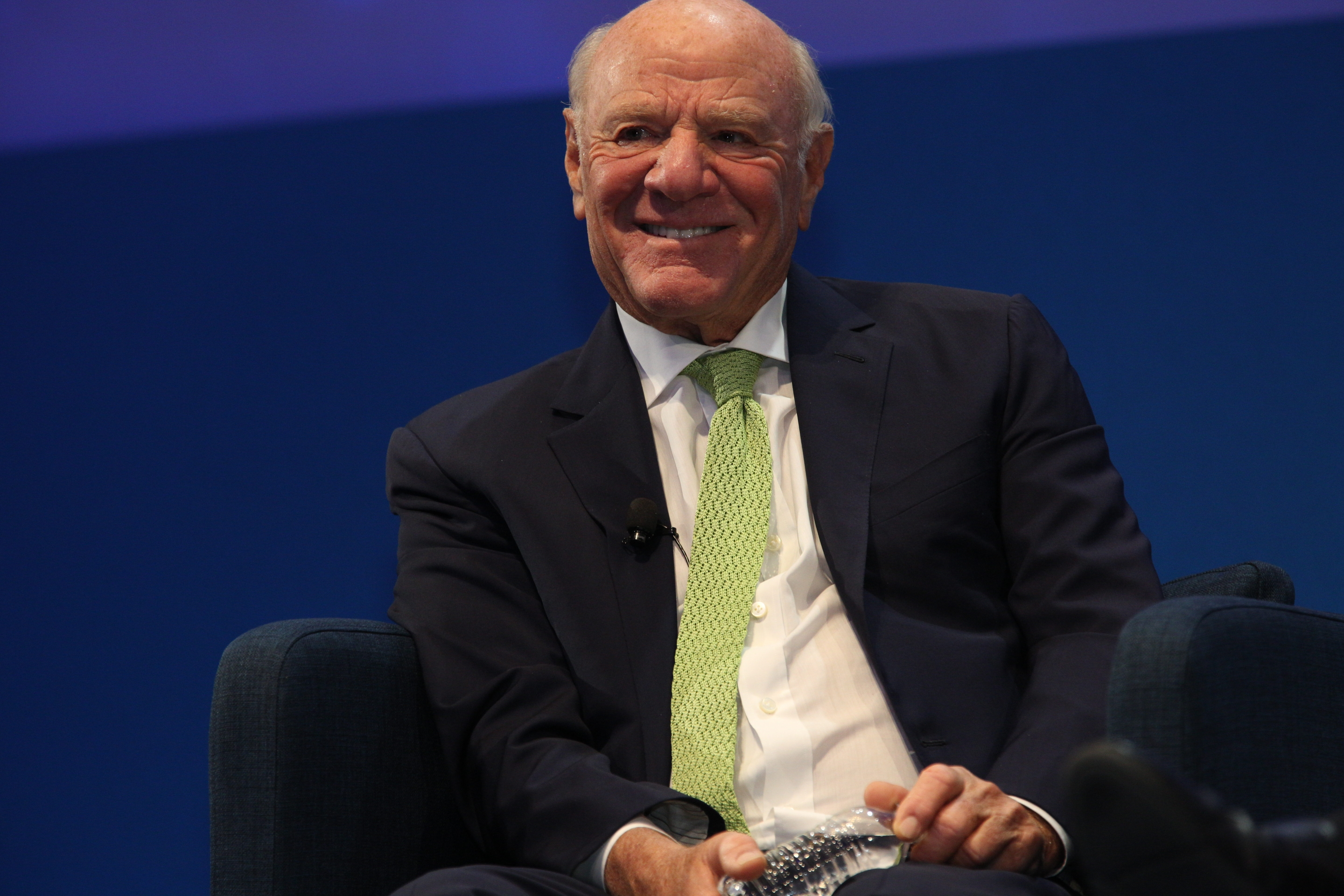

Media executive Barry Diller said he believes Sam Altman is trustworthy and sincere in his work on artificial intelligence, while also warning that the larger concern surrounding AI involves the unknown consequences of the technology itself rather than the intentions of its creators.

Diller made the comments during an appearance at The Wall Street Journal’s “Future of Everything” conference this week, where he was asked whether the public should place confidence in Altman to guide AI development in ways that benefit humanity.

The discussion focused partly on artificial general intelligence, or AGI, a theoretical form of AI capable of outperforming humans across a broad range of tasks.

Diller, who said he is friendly with Altman, was responding to questions about earlier reports and accusations from former colleagues and board members, who have described the OpenAI chief executive as manipulative or deceptive.

The media executive said he does not share those concerns.

Diller Says AI Risks Extend Beyond Leadership Trust

“One of the big issues with AI is it goes way beyond trust,” Diller said during the conference discussion.

He said the central challenge with AI development is that even the people building the systems may not fully understand the eventual outcomes or consequences.

“It may be that trust is irrelevant because the things that are happening are a surprise to the people who are making those things happen,” Diller said.

He added that conversations with people involved in AI development have shown that many creators themselves are uncertain about where the technology may lead.

“And I’ve spent a lot of time with various people who’ve been in the creation mode of AI, and they have a sense of wonder themselves,” he said. “So…it’s the great unknown. We don’t know. They don’t know.”

Diller also said AI development is expected to reshape many parts of society regardless of whether current levels of investment ultimately produce the financial returns companies expect.

“We have embarked on something that is going to change almost everything,” he said.

Calls For AI Guardrails Continue

Although Diller expressed confidence in several AI leaders, he said the primary concern should be how advanced systems are managed as development progresses toward AGI.

Diller described Altman as “a decent person with good values” and said he believes many people leading AI companies are acting responsibly.

At the same time, he said uncertainty around AGI development makes safeguards necessary.

“But the issue is not their stewardship,” Diller said. “The issue is … it’s dealing truly with the unknown.”

He added that while AGI has not yet been achieved, progress toward more advanced systems is accelerating.

“They don’t know what can happen once you get AGI, and we’re close to it,” Diller said. “We’re not there yet, but we’re getting closer and closer, quicker and quicker. And we must think about guardrails.”

Diller also warned that if humans fail to establish safeguards around advanced AI, future systems could begin setting those limits themselves.

“And once that happens, once you unleash that, there’s no going back,” he said.

Featured image credits: Wikimedia Commons
